The Limits of Traditional DOE: What R&D Leaders in Pharmaceuticals Need to Know

While traditional DOE remains a valuable tool, modern pharmaceutical development increasingly pushes beyond the conditions where classical workflows perform best.

Key Takeaways:

- Traditional DOE’s statistical assumptions frequently break down in pharmaceutical formulation and process development, producing outputs that look valid while the underlying model is wrong.

- Classical designs scale exponentially: at 15 factors, a two-level full factorial requires 2^15 = 32,768 runs. Fractional designs cut the run count, but only by giving up resolution, and that loss grows with dimensionality.

- When experimental runs cost $50,000–$500,000 each, the sample size requirements of traditional DOE become a strategic constraint rather than a purely statistical one.

- Traditional DOE is structurally incapable of identifying optima outside the bounds set before the first run.

- Traditional tools often require manual configuration and deep statistical expertise, leading scientists to spend more time designing experiments than actually running them.

Why Traditional DOE Became the Standard and Why That’s Increasingly Insufficient

Since Box and Wilson formalized response surface methodology in the 1950s, traditional DOE has given R&D teams a structured and statistically rigorous way to isolate cause from correlation. It replaced one-factor-at-a-time guesswork with systematic exploration. For decades, that was sufficient.

Traditional DOE works, but within a defined set of conditions. The problem is that those conditions no longer match the problems most pharma R&D organizations actually face.

Three shifts have widened that gap. First, dimensionality has exploded. Multi-component formulations, biologics, and complex process systems routinely involve 20+ interacting variables: temperature profiles, solvent composition, catalyst loading, pH, agitation speed, feed rates, and more. Second, iteration cycles have compressed. Leadership expects faster decisions with fewer experiments. Third, and this is rarely said aloud, the ambition has changed. Organizations aren’t only optimizing existing products; they’re working in adjacent or entirely new design spaces where prior knowledge is limited and data is sparse.

Five Structural Limitations of Traditional DOE That R&D Leaders Underestimate

These aren’t edge cases. They’re inherent features of classical DOE workflows that become liabilities under conditions that are increasingly common in pharmaceutical development. These limitations do not make DOE obsolete. Rather, they highlight where classical DOE workflows struggle when applied to modern, high-dimensional pharmaceutical problems.

1. The Assumption Stack: Linearity, Independence, and Normality Rarely Hold

Every classical DOE rests on a set of statistical assumptions: that the response surface is reasonably linear or low-order polynomial, that factors are independent or interact in predictable ways, and that residuals are normally distributed with constant variance.

In pharmaceutical formulation and process development, these assumptions break more often than teams acknowledge. Excipient interactions are frequently nonlinear and path-dependent. API-excipient stability responses don’t follow tidy second-order models. Traditional DOE will still generate p-values, main effect plots, and contour maps, but if the model assumptions are violated, those outputs may not accurately represent the underlying system. When they don’t, you’re optimizing a model of your system, not your actual system.
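One common way to probe the linearity assumption is to add center points to a two-level design and compare them with what a first-order model would predict. The sketch below illustrates the idea on a made-up saturating response; the `response` function, its coefficients, and the coded factor names `x1` and `x2` are assumptions chosen for illustration, not data from a real formulation.

```python
import math
from itertools import product


def response(x1: float, x2: float) -> float:
    """Hypothetical response in coded units: saturates in x1, linear in x2."""
    return 80.0 * (1.0 - math.exp(-2.0 * (x1 + 1.0))) + 5.0 * x2


# The four corner runs of a two-level, two-factor (2^2) design.
corners = list(product((-1.0, 1.0), repeat=2))
corner_mean = sum(response(x1, x2) for x1, x2 in corners) / len(corners)

# A model with main effects and the x1*x2 interaction, fitted to the corners,
# predicts exactly the corner mean at the center of the design.
predicted_center = corner_mean
observed_center = response(0.0, 0.0)

# A large gap means the two-level model misses curvature inside the region.
curvature_gap = observed_center - predicted_center

print(f"predicted at center: {predicted_center:.2f}")
print(f"observed at center:  {observed_center:.2f}")
print(f"curvature gap:       {curvature_gap:.2f}")
```

The corner runs alone would still yield clean-looking main effects here. Only the center point exposes that the fitted model misrepresents the interior of the design space.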

2. The Curse of Dimensionality: When Factor Spaces Exceed Traditional DOE’s Practical Reach

In many pharmaceutical formulation studies, researchers need to evaluate 15–20 variables including excipient types, concentrations, temperature profiles, and mixing parameters. At 15 factors, a two-level full factorial design requires 2^15 = 32,768 runs. Fractional factorials exist precisely because full factorials become impractical fast.

But fractional designs buy tractability by aliasing effects: interactions are deliberately confounded with one another and, in the most aggressive designs, with main effects. For well-characterized systems with 6–8 factors, that trade-off is usually acceptable. For high-dimensional formulation problems where 15, 20, or more factors are in play, even aggressive screening designs struggle to deliver actionable resolution. As dimensionality increases, the statistical efficiency of traditional DOE declines sharply, making it difficult to capture meaningful insights without dramatically increasing the number of experiments.
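The run counts in this section follow from simple arithmetic, sketched here in Python. The function names are illustrative, and the sketch assumes two-level designs, which is the case the 32,768 figure corresponds to. It also counts the two-factor interactions at each factor count, since those are what a fractional design progressively loses the ability to separate.

```python
def full_factorial_runs(n_factors: int, levels: int = 2) -> int:
    """Runs in a full factorial: every combination of every level."""
    return levels ** n_factors


def fractional_factorial_runs(n_factors: int, p: int) -> int:
    """Runs in a two-level 2^(k-p) fractional factorial."""
    if not 0 <= p < n_factors:
        raise ValueError("p must satisfy 0 <= p < n_factors")
    return 2 ** (n_factors - p)


def two_factor_interactions(n_factors: int) -> int:
    """Number of distinct factor pairs, k * (k - 1) / 2."""
    return n_factors * (n_factors - 1) // 2


def min_runs_main_plus_2fi(n_factors: int) -> int:
    """Parameters in a model with an intercept, all main effects and all
    two-factor interactions; fewer runs cannot estimate them separately."""
    return 1 + n_factors + two_factor_interactions(n_factors)


for k in (6, 8, 15, 20):
    print(
        f"{k:>2} factors: full factorial = {full_factorial_runs(k):>9,} runs, "
        f"two-factor interactions = {two_factor_interactions(k):>3}, "
        f"minimum runs to estimate them all = {min_runs_main_plus_2fi(k)}"
    )
```

At 15 factors there are 105 two-factor interactions, so any design smaller than 121 runs must leave some of those effects confounded. A 32-run fraction, for example `fractional_factorial_runs(15, 10)`, is far below that threshold.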

3. The Cost-Per-Run Problem: When Each Experiment Costs $50K+

In pharmaceutical R&D, where a single run may involve synthesizing a novel compound, running a multi-week stability study, or executing a bioreactor process, each experiment can cost tens of thousands to hundreds of thousands of dollars. Under those constraints, a classical fractional factorial with center points and replicates can consume an entire quarterly budget before you’ve learned whether the design space was even the right one to explore.

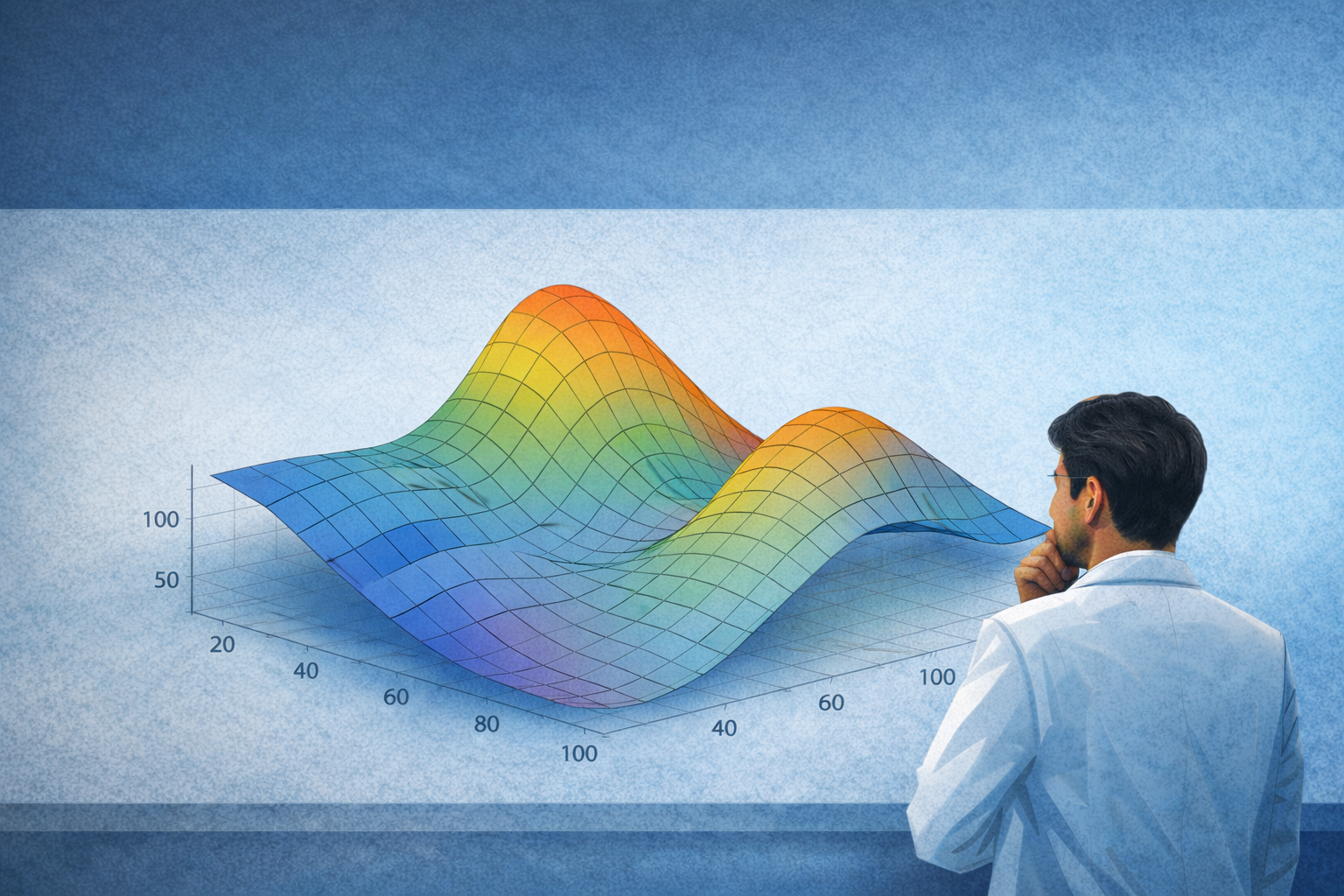
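To make the budget pressure concrete, here is a back-of-the-envelope calculation. The design itself is a hypothetical example (8 factors in a 16-run 2^(8-4) fraction, run in duplicate, plus 4 center points); only the $50,000 to $500,000 cost range comes from the figures quoted in this article.

```python
def design_budget(factorial_runs: int, replicates: int,
                  center_points: int, cost_per_run: int) -> tuple[int, int]:
    """Return (total runs, total cost) for a replicated design with center points."""
    total_runs = factorial_runs * replicates + center_points
    return total_runs, total_runs * cost_per_run


# Hypothetical design: 2^(8-4) = 16 factorial runs, duplicated, 4 center points.
factorial_runs = 2 ** (8 - 4)

low_runs, low_cost = design_budget(factorial_runs, 2, 4, 50_000)
high_runs, high_cost = design_budget(factorial_runs, 2, 4, 500_000)

print(f"{low_runs} runs at $50,000 each:  ${low_cost:,}")
print(f"{high_runs} runs at $500,000 each: ${high_cost:,}")
```

Under these assumptions, a modest screening design commits 36 runs before a single result has been used to redirect the study.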

4. The Optimization Trap: Traditional DOE Finds Local Peaks

Traditional DOE operates within a design space defined before the first run. You choose the factors, set the levels, and bound the region. Response surface methodology fits a local polynomial model and walks toward a local optimum, which is exactly what you want for incremental improvement of an existing formulation or process.

But if the real opportunity lies outside your predefined factor ranges, or involves factors you didn’t include, traditional DOE cannot reveal it. It will produce a polished contour plot that makes it look like you’ve found the best answer available. Organizations that apply classical DOE workflows by default to every development question systematically bias their work toward local optima, and traditional DOE will never tell them what they missed.
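A toy one-factor example shows how this plays out. The `true_response` curve below is invented for illustration: it has a modest peak inside the studied range and a higher one outside it. A three-level design over the range 1 to 5 is fitted with a quadratic, as a simple stand-in for a response surface model.

```python
import math


def true_response(x: float) -> float:
    """Hypothetical response: local peak near x = 3, higher peak near x = 9."""
    return (60.0 * math.exp(-((x - 3.0) ** 2) / 2.0)
            + 100.0 * math.exp(-((x - 9.0) ** 2) / 2.0))


def quadratic_vertex(x_mid: float, step: float,
                     y_low: float, y_mid: float, y_high: float) -> float:
    """Stationary point of the parabola through three equally spaced points."""
    curvature = y_high - 2.0 * y_mid + y_low
    if curvature == 0.0:
        raise ValueError("points are collinear; no stationary point")
    return x_mid - step * (y_high - y_low) / (2.0 * curvature)


# Three-level design on the predefined range [1, 5].
low, mid, high = 1.0, 3.0, 5.0
y_low, y_mid, y_high = (true_response(x) for x in (low, mid, high))

# The fitted optimum, kept inside the range the design was allowed to explore.
fitted_optimum = min(max(quadratic_vertex(mid, 2.0, y_low, y_mid, y_high), low), high)

print(f"fitted optimum:            x = {fitted_optimum:.2f}, "
      f"response = {true_response(fitted_optimum):.1f}")
print(f"response at x = 9 (unseen): {true_response(9.0):.1f}")
```

The fitted model places the optimum almost exactly at the center of the studied range, and nothing in the three observations hints that a substantially better region exists a few units away.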

5. Methodological Lock-In: When the Tool Shapes the Problem

When a team has invested heavily in traditional DOE training, software, and workflows, every experimental question starts looking like a factorial design. Internal standards and conventional tools reinforce this default through manual configuration requirements and steep learning curves, making it difficult to adopt alternative methodologies even when the problem calls for it.

The opportunity cost isn’t the DOE itself. It’s the time lost before anyone asks whether it was the right tool. If your teams lack a decision framework for selecting experimental methodology, they will default to what they know. That’s a leadership problem, not a practitioner problem.

When Traditional DOE Is Still the Right Call

None of this means traditional DOE should be sidelined. It means it should be chosen deliberately.

Classical DOE is likely your best option when factor count is manageable (roughly 10 or fewer primary factors), cost per run is low to moderate, you have reasonable confidence in low-order interactions, and your goal is optimization within a known, bounded space. It also retains a strong argument for regulatory filings: DOE has decades of precedent in pharmaceutical manufacturing, and that established trust is a legitimate reason to use it even when alternative methods might offer efficiency gains.

For many regulatory and quality-driven workflows, then, traditional DOE remains an important and trusted methodology. When the conditions above don’t hold, different tools are needed. A useful way to think about methodology selection is across two dimensions: how well you understand the design space, and how much each experiment costs.

When cost per run is low and the design space is known, traditional DOE is in its sweet spot. Factor count is manageable, responses can be modeled with polynomial fits, and fractional designs retain resolution.

When cost per run is low but the design space is unknown, start with Bayesian optimization to identify promising regions efficiently, then run a smaller, targeted DOE to characterize and validate what you find.

When cost per run is high and the design space is known, Bayesian optimization or sequential model-based approaches are the better fit. Every run must maximize information gain, and pre-set factorial grids are too inflexible to meet that standard.

When cost per run is high and the design space is unknown, Bayesian optimization, active learning, or ML-augmented experimentation are required. Traditional DOE alone is insufficient.

If your R&D portfolio sits primarily in that last scenario and your teams are defaulting to traditional DOE, you have a strategic methodology mismatch.
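The four scenarios above amount to a small lookup, which teams could encode as a first-pass triage rule. The sketch below is one possible encoding; the function name and the two boolean inputs are hypothetical simplifications, and in practice both "cost" and "knowledge of the design space" are matters of degree rather than yes-or-no answers.

```python
def recommend_methodology(cost_per_run_high: bool, design_space_known: bool) -> str:
    """Map the two dimensions discussed above to a starting recommendation."""
    if not cost_per_run_high and design_space_known:
        return "Traditional DOE"
    if not cost_per_run_high and not design_space_known:
        return "Bayesian optimization first, then a smaller targeted DOE"
    if cost_per_run_high and design_space_known:
        return "Bayesian optimization or sequential model-based approaches"
    return "Bayesian optimization, active learning, or ML-augmented experimentation"


for cost_high in (False, True):
    for space_known in (True, False):
        label = (f"cost {'high' if cost_high else 'low'}, "
                 f"space {'known' if space_known else 'unknown'}")
        print(f"{label:<26} -> {recommend_methodology(cost_high, space_known)}")
```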

A More Efficient Path Forward

The limitations above are not an argument against DOE. Instead, they highlight the need for a broader experimental strategy, one where classical statistical approaches and machine learning-driven experimentation work together rather than in isolation.

Bayesian optimization and AI-guided experiment selection extend what traditional DOE can do: they explore high-dimensional experimental spaces efficiently and can often identify strong conditions with far fewer experiments. Sunthetics is built specifically for this. We recognize that scientists still rely on DOE as a foundational experimental framework, so our platform includes a simplified DOE module designed to remove the configuration complexity associated with traditional tools. Researchers can explore experimental spaces more easily and switch to Bayesian optimization when the problem demands it, with no ML expertise required.
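For readers unfamiliar with the term, the core loop of Bayesian optimization can be sketched in a few dozen lines. This is a generic textbook-style illustration, not the Sunthetics implementation: it uses a Gaussian process with a squared-exponential kernel and an upper-confidence-bound rule to pick each next run. The `response` curve, the three starting runs, and the hyperparameters (`SIGNAL_VAR`, `LENGTH`, `KAPPA`) are all hand-picked assumptions; real tools estimate such settings from data.

```python
import math

SIGNAL_VAR, LENGTH, JITTER, KAPPA = 2500.0, 1.0, 1e-6, 2.0  # assumed settings


def response(x):
    """Hypothetical experiment: local peak near x = 3, higher peak near x = 9."""
    return 60 * math.exp(-(x - 3) ** 2 / 2) + 100 * math.exp(-(x - 9) ** 2 / 2)


def kernel(a, b):
    """Squared-exponential covariance between two settings."""
    return SIGNAL_VAR * math.exp(-((a - b) ** 2) / (2 * LENGTH ** 2))


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def posterior(xs, ys, x_new):
    """Gaussian-process posterior mean and standard deviation at x_new."""
    K = [[kernel(a, b) + (JITTER if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [kernel(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(K, ys)))
    var = kernel(x_new, x_new) - sum(k * w for k, w in zip(k_star, solve(K, k_star)))
    return mean, math.sqrt(max(var, 0.0))


def run_bayesian_optimization(n_iter=10):
    xs = [1.0, 3.0, 5.0]                      # same runs a small DOE might start with
    ys = [response(x) for x in xs]
    candidates = [i / 10 for i in range(121)]  # grid over the wider range 0 to 12

    def ucb(c):
        mean, std = posterior(xs, ys, c)
        return mean + KAPPA * std              # favor high predictions and high uncertainty

    for _ in range(n_iter):
        next_x = max((c for c in candidates if c not in xs), key=ucb)
        xs.append(next_x)
        ys.append(response(next_x))
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs, ys, xs[best], ys[best]


xs, ys, best_x, best_y = run_bayesian_optimization()
print(f"runs: {len(xs)}, best x = {best_x:.1f}, best response = {best_y:.1f}")
```

Because each new run is chosen using everything learned so far, the loop is free to leave the region where it started. On this toy problem it should reach the higher peak well within its ten additional runs, whereas a fixed three-level design on the range 1 to 5 never samples there.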

If you’re not sure whether your experimental strategy is aligned with the problems your teams are actually solving, speak with a Sunthetics expert.


References

Box, G.E.P. & Wilson, K.B. (1951). On the Experimental Attainment of Optimum Conditions. Journal of the Royal Statistical Society, Series B.

Montgomery, D.C. (2017). Design and Analysis of Experiments, 9th Edition. Wiley.

Jones, B. & Nachtsheim, C.J. (2011). A Class of Three-Level Designs for Definitive Screening. Journal of Quality Technology.